Test of Altaro Backup v4 for Hyper-V

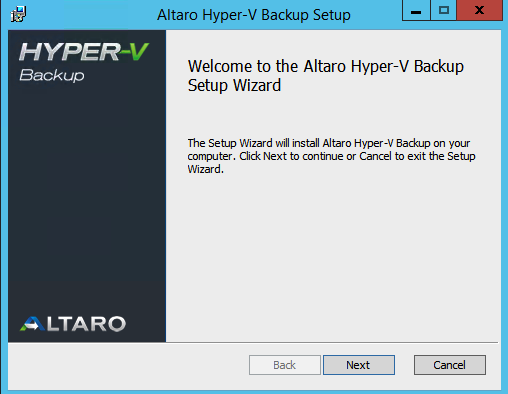

Several backup vendors now support Hyper-V and host-level backup. I have tested some of them, and I also wanted to see how the Altaro Hyper-V Backup solution works.

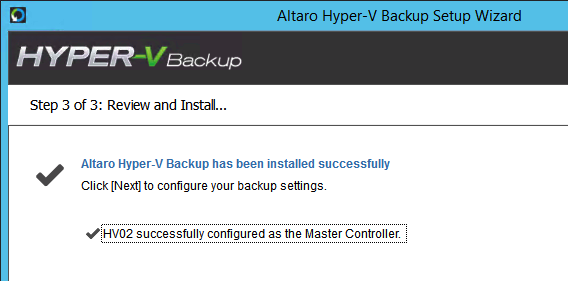

Installing and getting started with the Altaro solution is easy. I like that the install wizard immediately recognises whether I am installing on a cluster node or on a standalone Hyper-V host.

Installation is fully supported on both Core and full installations of Hyper-V 2012 and 2012 R2.

When I installed it on my one-node Hyper-V 2012 R2 cluster, it detected the node and promoted it to master controller.

It was easy to select the VMs to include in the backup and then schedule a backup job.

Some really nice features in Altaro Backup are:

- Offsite backup with WAN acceleration

- Exchange item-level restore

- Remote Management Console

- Fast Hyper-V backup/restore

- Compression/Encryption

- Live backup of Linux VMs

- Instant boot from backup

If you register, you get a 30-day trial with the full feature set. You can also download the free version, which lets you back up two VMs indefinitely 🙂, although with some limitations.