Azure Certification Guide: AZ-103 / AZ-104

Now it is time for a guide on how to pass the Azure Administrator Associate exam.

I passed this one at Ignite 2019, where attendees could take exams for free all week! Because of the number of attendees, though, there was a limit of one (1) exam per person.

Skills measured:

- Manage Azure subscriptions and resources (15-20%)

- Implement and manage storage (15-20%)

- Deploy and manage virtual machines (VMs) (15-20%)

- Configure and manage virtual networks (30-35%)

- Manage identities (15-20%)

As you can see on the certification page, a transition is underway. Since the content has changed quite a bit, it is a good idea to take the AZ-104 instead to be future-proof!

Learning resources:

I used a few different resources to prepare. I already had the 70-533 in my baggage, so I had some understanding of the administrator exams.

First of all, Microsoft Learn has a learning path that covers a great deal of the required skills.

Secondly, I used a book from Packt written by Sjoukje Zaal, which was very good and got me even better prepared.

The book works through the required skills in a structured way, which helps the learning process.

What you learn from the book:

- Configure Azure subscription policies and manage resource groups

- Monitor the activity log by using Log Analytics

- Modify and deploy Azure Resource Manager (ARM) templates

- Protect your data with Azure Site Recovery

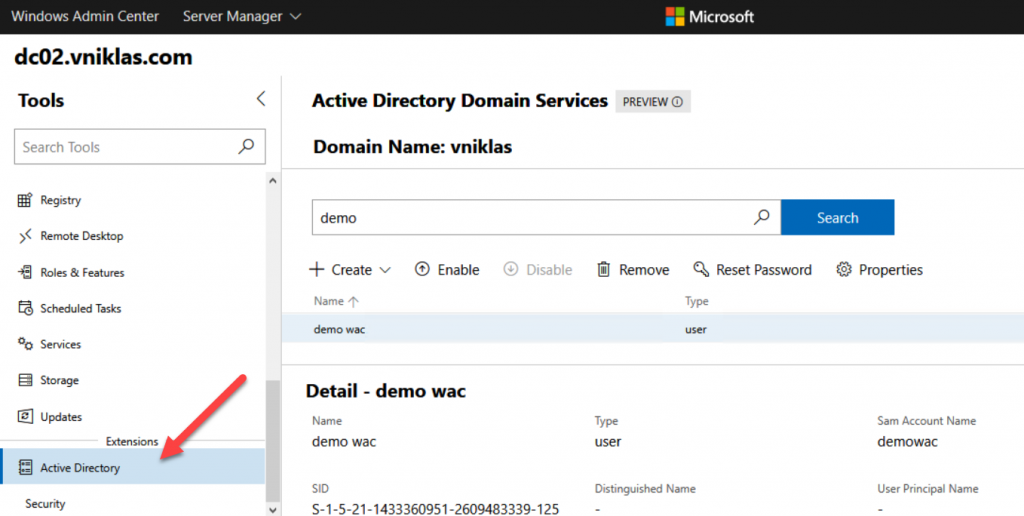

- Learn how to manage identities in Azure

- Monitor and troubleshoot virtual network connectivity

- Manage Azure Active Directory Connect, password sync, and password writeback

Good luck with your preparations, and I hope you will be as successful as I was!