Windows Server (2019) vNext LTSC build 17623 released

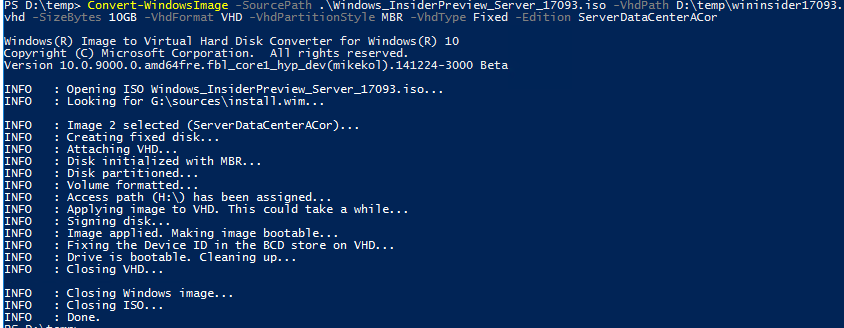

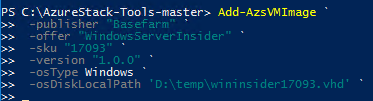

Today the preview build of Windows Server vNext LTSC (Windows Server 2019) was released to the Windows Server Insider program, so you can now download it and test the features and the system itself.

Some info from the tech community site:

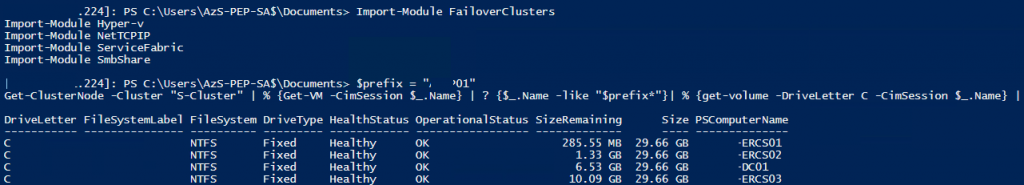

Extending your Clusters with Cluster Sets

“Cluster Sets” is the new cloud scale-out technology in this Preview release that increases cluster node count in a single SDDC (Software-Defined Data Center) cloud by orders of magnitude. A Cluster Set is a loosely-coupled grouping of multiple Failover Clusters: compute, storage or hyper-converged. Cluster Sets technology enables virtual machine fluidity across member clusters within a Cluster Set and a unified storage namespace across the “set” in support of virtual machine fluidity. While preserving existing Failover Cluster management experiences on member clusters, a Cluster Set instance additionally offers key use cases around lifecycle management of a Cluster Set at the aggregate.

Failover Cluster removing use of NTLM authentication

Windows Server Failover Clusters no longer use NTLM authentication; they now use Kerberos and certificate-based authentication exclusively. There are no changes required by the user, or deployment tools, to take advantage of this security enhancement. It also allows failover clusters to be deployed in environments where NTLM has been disabled.

Encrypted Network in SDN

Network traffic going out from a VM host can be snooped on and/or manipulated by anyone with access to the physical fabric. While shielded VMs protect VM data from theft and manipulation, similar protection is required for network traffic to and from a VM. While the tenant can set up protection such as IPsec, this is difficult due to configuration complexity and heterogeneous environments.

Encrypted Networks is a feature that provides simple-to-configure DTLS-based encryption, using the Network Controller to manage the end-to-end encryption and protect data as it travels through the wires and network devices between the hosts. It is configured by the administrator on a per-subnet basis. This enables the VM-to-VM traffic within the VM subnet to be automatically encrypted as it leaves the host and prevents snooping and manipulation of traffic on the wire. This is done without requiring any configuration changes in the VMs themselves.

Windows Defender Advanced Threat Protection

Windows Defender ATP Exploit Guard

If you have not signed up for the Windows Server Insider program, do so now and start playing with this new release. I am in the middle of upgrading my lab!