Schedule Hyper-V VM replication for non-office hours with PowerShell

If you have set up Hyper-V Replica and are replicating your VMs to a disaster recovery site or a branch office, that site might have only a small WAN connection to the datacenter, and the ISP might not be able to offer a faster one. In that case you may want to stop replication during office hours and resume it at night. You can do that with scheduled jobs, a feature introduced in PowerShell version 3.

This will of course affect your recovery if a disaster strikes, since the replica can be as old as the last time replication ran. It can be compared to having an offsite DPM server that you sync to every 24 hours.

Anyway, if you want, you can set up scheduled jobs that suspend and resume replication of a VM. I wrote an earlier blog post about setting up scheduled jobs. I am using the following cmdlets in this case:

- Suspend-VMReplication

- Resume-VMReplication

A simple example: I have a VM that I am currently replicating, and I want replication to be suspended during the day and resumed when all my users have gone home and I have all the bandwidth again.

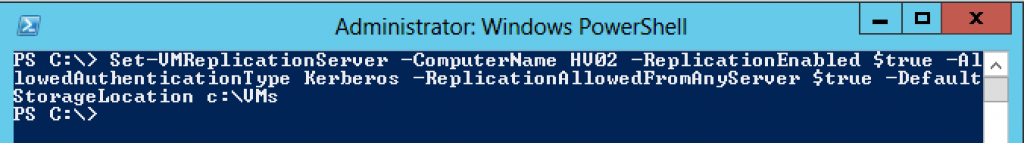

First I configure HV02 as the replica server that receives my replicated VMs:

PS C:\> Set-VMReplicationServer -ComputerName HV02 -ReplicationEnabled $true -AllowedAuthenticationType Kerberos -ReplicationAllowedFromAnyServer $true -DefaultStorageLocation c:\VMs

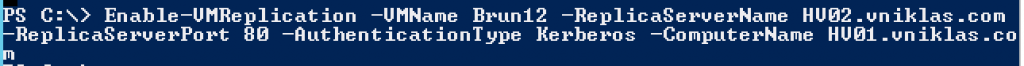

Then I enable replication for the VM and start the initial replication:

PS C:\> Enable-VMReplication -VMName Brun12 -ReplicaServerName HV02.vniklas.com -ReplicaServerPort 80 -AuthenticationType Kerberos -ComputerName HV01.vniklas.com

PS C:\> Start-VMInitialReplication -VMName brun12 -ComputerName HV01

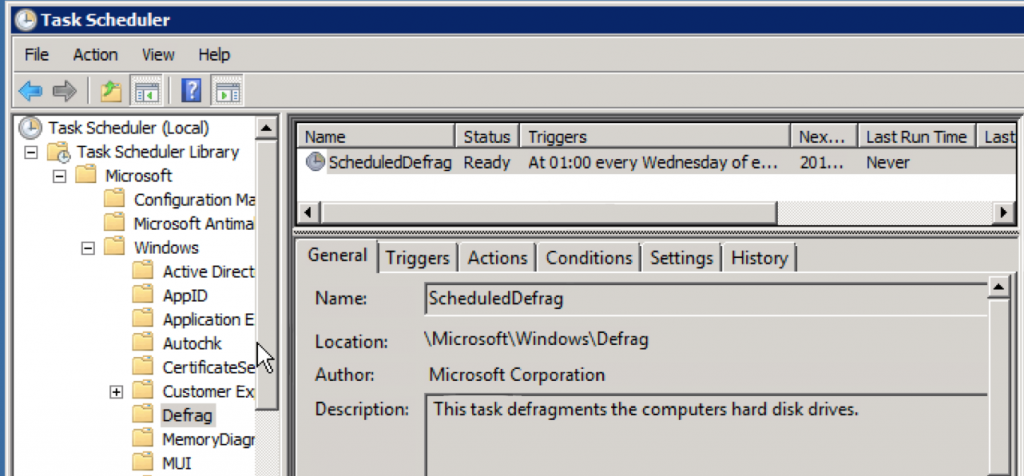

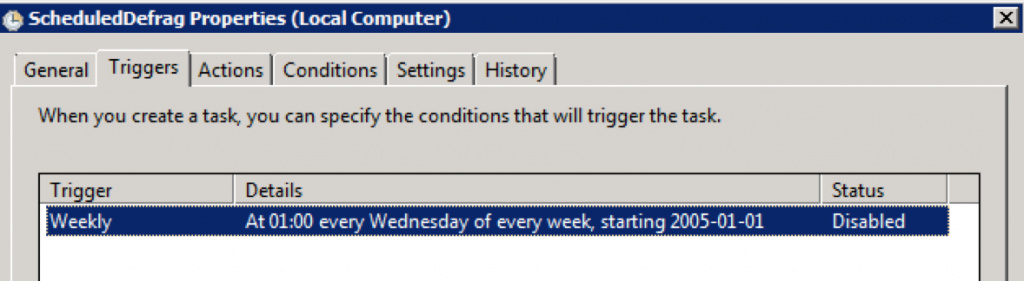

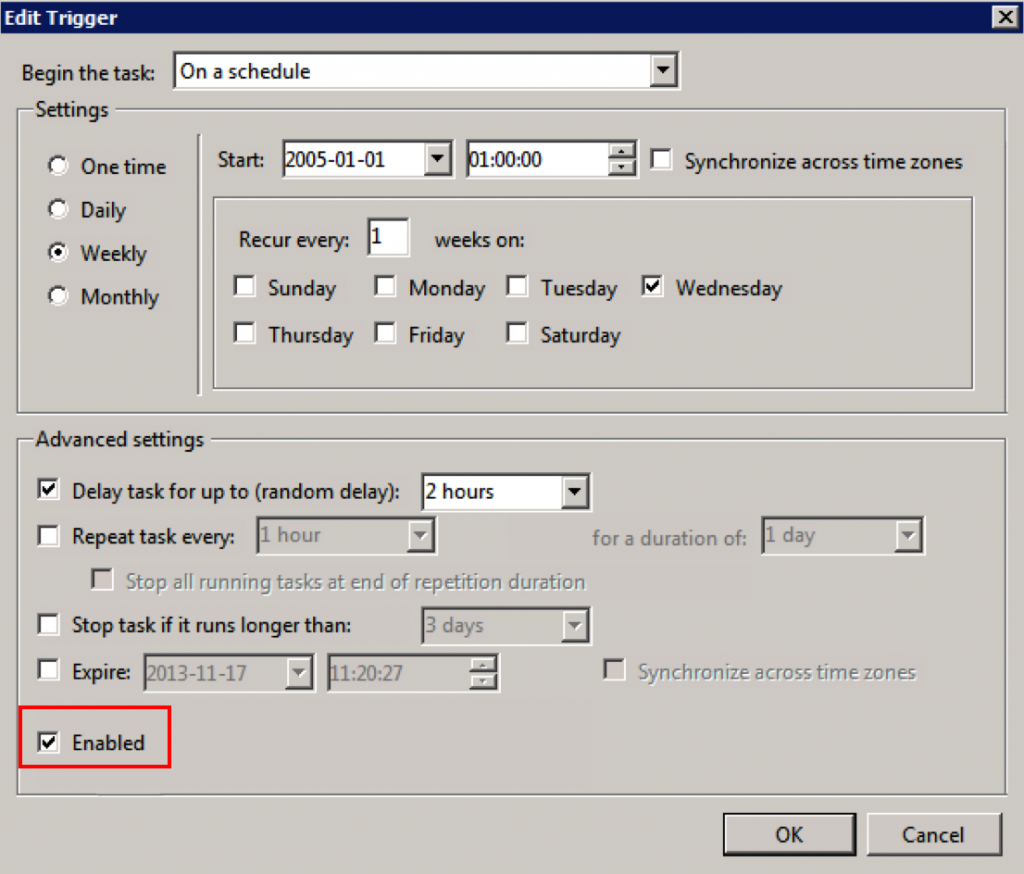

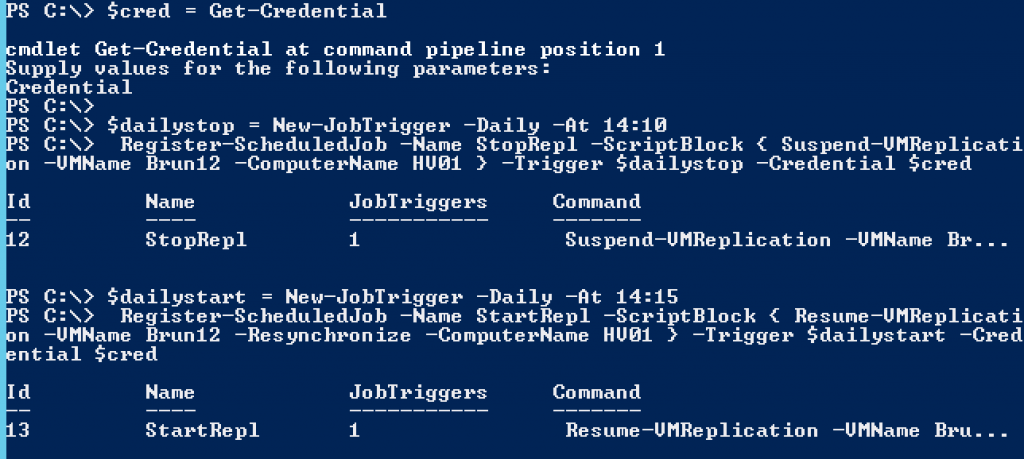

So how do I schedule this? As you can see in my screenshots, I have used other times for my scheduled jobs than you might want in your environment. You can also use parameters other than -Daily. Run Get-Help New-JobTrigger -Full to see all the options.

PS C:\> $cred = Get-Credential

PS C:\> $dailystop = New-JobTrigger -Daily -At 14:10

PS C:\> Register-ScheduledJob -Name StopRepl -ScriptBlock { Suspend-VMReplication -VMName Brun12 -ComputerName HV01 } -Trigger $dailystop -Credential $cred

PS C:\> $dailystart = New-JobTrigger -Daily -At 14:15

PS C:\> Register-ScheduledJob -Name StartRepl -ScriptBlock { Resume-VMReplication -VMName Brun12 -Resynchronize -ComputerName HV01 } -Trigger $dailystart -Credential $cred
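For a real office-hours schedule, a weekly trigger limited to weekdays may fit better than -Daily. The following is a minimal sketch and not something taken from my screenshots: the times 07:00 and 18:00 and the job names SuspendReplWeekdays and ResumeReplWeekdays are assumptions for illustration, while the VM and host names and the suspend/resume commands are the same as in the jobs above.

```powershell
# Assumed office hours: suspend at 07:00, resume at 18:00, Monday to Friday.
# Adjust the times and days to your own environment.
$cred     = Get-Credential
$weekdays = 'Monday', 'Tuesday', 'Wednesday', 'Thursday', 'Friday'

# One trigger for the start of the working day, one for the end.
$officeOpen  = New-JobTrigger -Weekly -DaysOfWeek $weekdays -At '07:00'
$officeClose = New-JobTrigger -Weekly -DaysOfWeek $weekdays -At '18:00'

# Hypothetical job names; the script blocks mirror the StopRepl and StartRepl jobs.
Register-ScheduledJob -Name SuspendReplWeekdays -Trigger $officeOpen -Credential $cred -ScriptBlock {
    Suspend-VMReplication -VMName Brun12 -ComputerName HV01
}

Register-ScheduledJob -Name ResumeReplWeekdays -Trigger $officeClose -Credential $cred -ScriptBlock {
    Resume-VMReplication -VMName Brun12 -Resynchronize -ComputerName HV01
}
```

With this variant replication keeps running over the whole weekend, because nothing suspends it on Saturday or Sunday.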

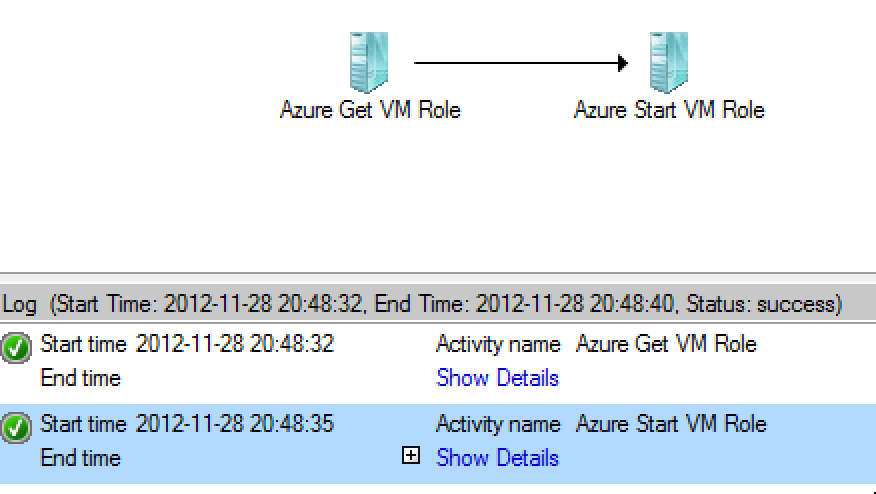

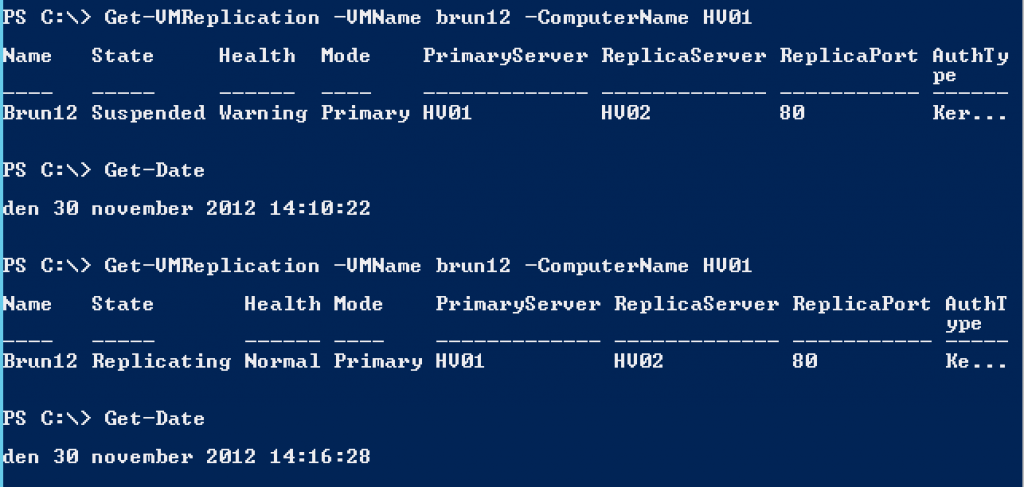

And in this screenshot you can see that it works.
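If you prefer to check from the console instead of a screenshot, something like the following should do. It is only a sketch that assumes the job names StopRepl and StartRepl from above and that each job has run at least once.

```powershell
# Results of scheduled jobs only show up in the session once the module is loaded.
Import-Module PSScheduledJob

# List the registered job definitions and the triggers attached to them.
Get-ScheduledJob -Name StopRepl, StartRepl
Get-ScheduledJob -Name StopRepl, StartRepl | Get-JobTrigger

# List the job instances that have run so far.
Get-Job -Name StopRepl, StartRepl

# Read the output (or errors) of the resume job without discarding it.
Get-Job -Name StartRepl | Receive-Job -Keep
```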

Another minor detail: if you have a VM that changes a lot of data on its virtual disks during the day, resynchronization will take a while after a longer suspension.
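To keep an eye on how the VM is doing after it has been resumed, you can query the replication state from the primary host. This is a small sketch using the same VM and host names as above; the selection of properties is just my suggestion.

```powershell
# Show the current replication state and health of the VM on the primary host.
Get-VMReplication -VMName Brun12 -ComputerName HV01 |
    Format-List VMName, State, Health, Mode

# Show the replication statistics that Hyper-V keeps for the VM.
Measure-VMReplication -VMName Brun12 -ComputerName HV01 |
    Format-List *
```

Running this a while after the resume job has fired gives you an idea of whether the night is long enough to catch up before the next suspension.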