Announcing the Windows Server Summit on June 26

On June 26, Microsoft will host a half-day summit on Windows Server that you do not want to miss!

The agenda will have four different tracks:

- Hybrid: We’ll cover how you can run Windows Server workloads both on-premises and in Azure, as well as show you how Azure services can be used to manage Windows Server workloads running in the cloud or on-premises.

- Security: We know security is top of mind for many of you, and we have tons of great new and improved security features that we can’t wait to show you to help you elevate your security posture.

- Application Platform: Containers are changing the way developers and operations teams run applications today. In this track we’ll share what’s new in Windows Server to support the modernization of applications running on-premises or in Azure.

- Hyper-converged Infrastructure: This is the next big thing in IT, and Windows Server 2019 brings amazing new capabilities that build on Windows Server 2016. Join this track to learn how to bring your on-premises infrastructure to the next level.

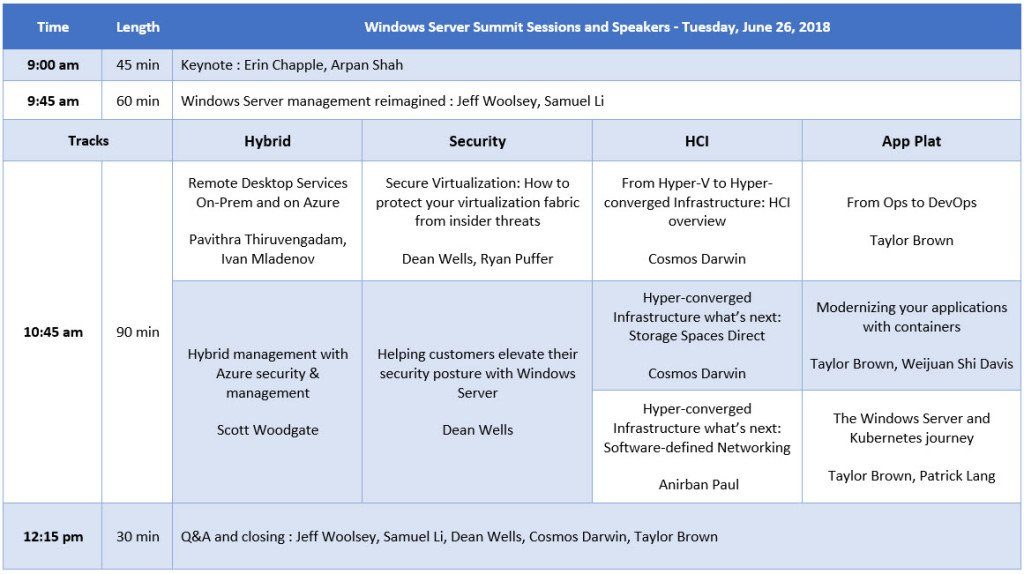

Agenda with times and speakers:

Here you can find the link to the summit and download a reminder.